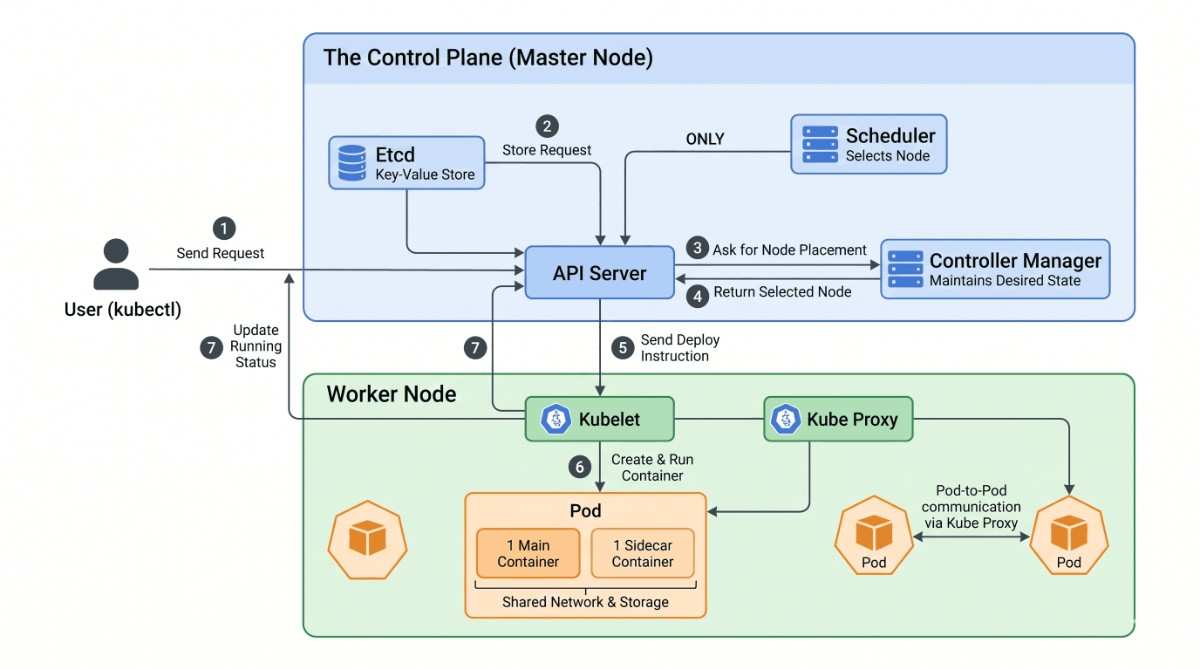

Kubernetes is the industry standard for container orchestration, but its architecture can initially feel complex. To understand how it works, start with its two main parts: the Control Plane, the brain that makes decisions, and the Worker Nodes, the machines that do the actual work.

Kubernetes Architecture – The Control Plane (Master Node)

The Control Plane is the management layer of your Kubernetes cluster. It does not normally run your application workloads; instead, it decides how, when, and where they should run.

- API Server (The Central Hub): This is the gatekeeper of the cluster. Every single request, whether from an engineer or an internal system component, must go through the API Server. It authenticates, validates, and routes all instructions.

- Etcd (The Source of Truth): This is a distributed, strongly consistent key-value store, usually run as several replicas for high availability. It holds the cluster's configuration and its recorded state. Crucially, in a standard setup only the API Server communicates directly with Etcd.

- Scheduler (The Decision Maker): When a new application needs a home, the Scheduler evaluates the resource requirements (such as CPU and memory) and finds the most appropriate Worker Node for the job.

- Controller Manager (The State Maintainer): This component runs a set of controllers continuously in the background. Each controller compares the “desired state” of the cluster with the “actual state” and acts to close the gap, for example by creating a replacement Pod when one crashes or its node goes offline.
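
The desired-versus-actual comparison described for the Controller Manager can be illustrated with a small toy model. This is only a sketch in plain Python, not how Kubernetes itself is implemented: `ClusterState`, `reconcile`, and the `web` application are hypothetical names invented for the illustration, and a single dictionary stands in for the state that a real controller would read through the API Server.

```python
from dataclasses import dataclass


@dataclass
class ClusterState:
    """Toy stand-in for the state a controller reads through the API Server."""
    desired_replicas: dict      # app name -> number of Pods wanted
    running_pods: dict          # app name -> list of Pod names currently running
    next_id: int = 0            # counter used to give new Pods unique names


def reconcile(state):
    """Run one comparison pass and return the corrective actions taken."""
    actions = []
    for app, desired in state.desired_replicas.items():
        pods = state.running_pods.setdefault(app, [])
        # Too few Pods: create new ones until the count matches.
        while len(pods) < desired:
            name = f"{app}-{state.next_id}"
            state.next_id += 1
            pods.append(name)
            actions.append(f"create {name}")
        # Too many Pods: remove the surplus.
        while len(pods) > desired:
            actions.append(f"delete {pods.pop()}")
    return actions


state = ClusterState(desired_replicas={"web": 3}, running_pods={"web": []})
first_pass = reconcile(state)               # nothing is running yet, so Pods are created
state.running_pods["web"].remove("web-1")   # simulate a crashed Pod
second_pass = reconcile(state)              # the gap is noticed and filled
third_pass = reconcile(state)               # states already match, nothing to do
print(first_pass, second_pass, third_pass)
```

The point of the design is that the function never needs to know why the counts differ. It only looks at the gap and closes it, which is why the same pass handles both a first rollout and a crash.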

Kubernetes Architecture – The Worker Nodes

Worker Nodes are the virtual or physical machines where your applications actually live and execute.

- Kubelet (The Executor): Think of this as the primary agent installed on the node. It watches the API Server for Pods assigned to its node and, working through the node's container runtime, ensures the required containers are running and healthy.

- Kube Proxy (The Networker): This component maintains the network rules on each node that route traffic addressed to a Service to the Pods behind it, which lets applications in the cluster reach each other.

- The Pod: The smallest deployable unit in Kubernetes. Kubernetes does not run containers directly on a node; it encapsulates them in a Pod. The Pod gives the main application container and any optional helper containers a shared environment with common network and storage resources.
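
To make the node-side pieces more concrete, here is a minimal sketch of a Pod as a group of containers sharing one address and one set of volumes, together with a toy node agent that only acts on Pods bound to its own node. Everything in it is an assumption made for illustration: `Pod`, `ToyKubelet`, the node names, and the made-up IP addresses do not correspond to real Kubernetes APIs.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    """Toy Pod: every container listed here shares the same IP and volumes."""
    name: str
    node: str                       # node this Pod has been assigned to
    containers: list                # main container plus optional helpers
    ip: str = ""                    # assigned once, shared by all containers
    volumes: dict = field(default_factory=dict)
    phase: str = "Pending"


class ToyKubelet:
    """Hypothetical node agent: runs only the Pods bound to its own node."""

    def __init__(self, node_name):
        self.node_name = node_name
        self.started = []           # (pod name, container name) pairs started here

    def sync(self, all_pods):
        for pod in all_pods:
            if pod.node != self.node_name or pod.phase == "Running":
                continue            # not ours, or already handled
            pod.ip = f"10.0.0.{len(self.started) + 2}"   # made-up address
            for container in pod.containers:
                self.started.append((pod.name, container))
            pod.phase = "Running"


pods = [
    Pod("web", node="node-a", containers=["app", "log-shipper"],
        volumes={"logs": "/var/log/app"}),
    Pod("db", node="node-b", containers=["postgres"]),
]
kubelet = ToyKubelet("node-a")
kubelet.sync(pods)
print(pods[0].phase, pods[0].ip, kubelet.started)
print(pods[1].phase)   # untouched: it belongs to a different node
```

Both containers of the `web` Pod end up behind a single IP with the same `logs` volume, which is the sharing the Pod abstraction exists to provide.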

The Kubernetes Architecture Workflow:

Understanding the structure is helpful, but seeing the components interact makes the system truly clear. Let’s trace the sequence of events that takes place behind the scenes when an engineer deploys a new application to the cluster.

- The Request: An engineer submits a deployment request to the cluster via a command-line interface or dashboard. This request goes directly to the API Server.

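- Recording the Desired State: The API Server validates the request and writes it into the Etcd database. If the request is a Deployment, controllers in the Controller Manager then create the corresponding Pod objects through the API Server. The cluster now knows the new Pod is desired, but it is not yet running on any node.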

- Seeking Placement: The Scheduler, which continuously watches the API Server, notices a new Pod that has not yet been assigned to a node.

- The Decision: The Scheduler reviews the available Worker Nodes, selects a suitable machine with enough compute resources, and reports this decision back to the API Server.

- Sending the Instruction: The API Server does not push the work to the node. Instead, the Kubelet on the selected Worker Node, which watches the API Server, sees that a Pod has been assigned to its node and begins deploying it.

- Execution: The Kubelet has the node's container runtime pull the necessary container images from the registry and start the Pod's containers.

- Status Update: Once the container is successfully running, the Kubelet sends a confirmation status back to the API Server.

- Updating the Source of Truth: The API Server records this running status in Etcd.

The loop is closed. The application is now live, and the actual state of the cluster matches the desired state.
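
The sequence above can be traced end to end with a small simulation. This is a rough sketch under stated assumptions rather than a picture of the real implementation: `ToyAPIServer`, `schedule`, `kubelet_sync`, the node names, and the resource figures are all hypothetical, a dictionary stands in for Etcd, and the scheduling rule (filter nodes that fit, then prefer the one with the most free CPU) is a deliberately simplified stand-in for the real Scheduler's logic.

```python
class ToyAPIServer:
    """Hypothetical API Server: the only component that touches the store."""

    def __init__(self):
        self.store = {}   # stand-in for Etcd: pod name -> record
        self.log = []     # ordered trace of what happened

    def create_pod(self, name, cpu, memory_mb):
        self.store[name] = {"cpu": cpu, "memory_mb": memory_mb,
                            "node": None, "phase": "Pending"}
        self.log.append(f"stored desired state for {name}")

    def unscheduled(self):
        return [n for n, p in self.store.items() if p["node"] is None]

    def bind(self, name, node):
        self.store[name]["node"] = node
        self.log.append(f"bound {name} to {node}")

    def pending_on(self, node):
        return [n for n, p in self.store.items()
                if p["node"] == node and p["phase"] == "Pending"]

    def set_phase(self, name, phase):
        self.store[name]["phase"] = phase
        self.log.append(f"{name} is {phase}")


def schedule(api, free):
    """Keep nodes that fit the request, then pick the one with most free CPU."""
    for name in api.unscheduled():
        pod = api.store[name]
        fits = [n for n, r in free.items()
                if r["cpu"] >= pod["cpu"] and r["memory_mb"] >= pod["memory_mb"]]
        if not fits:
            continue  # the Pod stays Pending until capacity appears
        best = max(fits, key=lambda n: free[n]["cpu"])
        free[best]["cpu"] -= pod["cpu"]
        free[best]["memory_mb"] -= pod["memory_mb"]
        api.bind(name, best)


def kubelet_sync(api, node):
    """Node agent: start Pods bound to this node and report status back."""
    for name in api.pending_on(node):
        api.set_phase(name, "Running")   # image pull and container start elided


api = ToyAPIServer()
free = {"node-a": {"cpu": 1.0, "memory_mb": 512},
        "node-b": {"cpu": 4.0, "memory_mb": 8192}}
api.create_pod("web", cpu=2.0, memory_mb=1024)   # the request and the stored desired state
schedule(api, free)                              # placement decision, reported via the API Server
for node in free:
    kubelet_sync(api, node)                      # each node agent checks for its own Pods
print("\n".join(api.log))
```

Note that `schedule` and `kubelet_sync` never talk to each other or to the store directly. Every read and write goes through the `ToyAPIServer` object, mirroring the central-hub role described earlier.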
